flowchart LR

subgraph Model["Model Space ℝᴹ"]

RS["Row Space<br/>𝓡(Aᵀ)<br/>dim = r"]

NS["Null Space<br/>𝓝(A)<br/>dim = M−r"]

end

subgraph Data["Data Space ℝᴺ"]

CS["Column Space<br/>𝓡(A)<br/>dim = r"]

LNS["Left Null Space<br/>𝓝(Aᵀ)<br/>dim = N−r"]

end

RS -->|"A"| CS

CS -->|"Aᵀ"| RS

NS -.->|"A → 0"| LNS

Inverse Problems

From Forward Modeling to Regularized Inversion in Exploration Seismology

School of Earth & Atmospheric Sciences — Georgia Institute of Technology

2026-02-16

Inverse Problems

From Forward Modeling to Regularized Inversion in Exploration Seismology

Felix J. Herrmann

School of Earth & Atmospheric Sciences — Georgia Institute of Technology

Outline

Part I — Foundations

- Forward & inverse problems

- Linear forward problem

- Strict inverse for invertible systems

- When the inverse does not exist

Part II — Least-Squares Inversion

- Least-squares formulation

- Normal equations

- Non-uniqueness & minimum-norm solutions

Part III — SVD & Generalized Inverses

- Singular Value Decomposition

- Fundamental subspaces

- Pseudo-inverse & four cases

Part IV — Regularization

- Condition number & ill-posedness

- Truncated SVD

- Tikhonov regularization

- Bayesian interpretation

Part V — Application

- Deconvolution as an inverse problem

- Numerical examples in Julia

Part I — Foundations

The Forward Problem

In exploration seismology, we relate model parameters \(\mathbf{m}\) (e.g., velocity, density, reflectivity) to observed data \(\mathbf{d}\) (e.g., seismograms) through a forward operator:

\[ \mathbf{d} = F(\mathbf{m}) \]

where \(F : \mathbb{R}^M \to \mathbb{R}^N\) maps from model space to data space.

Key distinction

The forward problem — given \(\mathbf{m}\), compute \(\mathbf{d}\) — is typically well-posed. The inverse problem — given \(\mathbf{d}\), recover \(\mathbf{m}\) — is typically ill-posed.

Forward Problem — Examples

| Forward Operator | Model \(\mathbf{m}\) | Data \(\mathbf{d}\) |

|---|---|---|

| Wave equation | Velocity field | Seismograms |

| Born approximation | Reflectivity | linearized data |

| Convolution | Reflectivity series | Seismic trace |

All share the common mathematical structure \(\mathbf{d} = F(\mathbf{m})\).

The Linear Forward Problem

When \(F\) is linear, we write

\[ \mathbf{d} = \mathbf{A}\,\mathbf{m} \]

where \(\mathbf{A} \in \mathbb{R}^{N \times M}\) is the forward modeling matrix.

Linearity means:

- \(F(\alpha\,\mathbf{m}_1 + \beta\,\mathbf{m}_2) = \alpha\,F(\mathbf{m}_1) + \beta\,F(\mathbf{m}_2)\)

Linearization of nonlinear problems:

\[ F(\mathbf{m}_0 + \delta\mathbf{m}) \approx F(\mathbf{m}_0) + \underbrace{\frac{\partial F}{\partial \mathbf{m}}\bigg|_{\mathbf{m}_0}}_{\mathbf{A}}\,\delta\mathbf{m} \]

When \(F(\mathbf{m}\) is the solution of the wave equation, this is the

- single-scattering Born approximation — the Jacobian \(\mathbf{A}\) is the Fréchet derivative.

- The vector \(\mathbf{m}_0\) is a smooth (non-reflecting) background-velocity model.

Forward & Inverse: Nonlinear vs. Linear

Nonlinear forward

\[\mathbf{d} = F(\mathbf{m})\]

Nonlinear inverse

\[\mathbf{m} = F^{-1}(\mathbf{d})\]

Solved iteratively — e.g., Gauss–Newton, gradient descent.

Linear forward

\[\mathbf{d} = \mathbf{A}\,\mathbf{m}\]

Linear inverse

\[\mathbf{m} = \mathbf{A}^{-1}\,\mathbf{d}\]

But does \(\mathbf{A}^{-1}\) exist?

Warning

Even when \(\mathbf{A}^{-1}\) exists, computing it may be numerically unstable.

The Strict Inverse

If \(\mathbf{A}\) is square (\(N = M\)) and invertible (\(\det(\mathbf{A}) \neq 0\)), the exact inverse exists:

\[ \mathbf{m} = \mathbf{A}^{-1}\,\mathbf{d} \]

Properties:

- \(\mathbf{A}\,\mathbf{A}^{-1} = \mathbf{A}^{-1}\,\mathbf{A} = \mathbf{I}\)

- Unique solution for every \(\mathbf{d}\)

Orthonormal matrices satisfy \(\mathbf{A}^\top\mathbf{A} = \mathbf{A}\,\mathbf{A}^\top = \mathbf{I}\), so the adjoint is the inverse.

In practice, this situation is rare because:

- \(N \neq M\) (more data than unknowns or vice versa)

- \(\mathbf{A}\) may be rank-deficient

- Noise contaminates the data

When Does the Inverse Not Exist?

Three scenarios arise:

1. Overdetermined (\(N > M\)): more equations than unknowns — no exact solution in general.

2. Underdetermined (\(N < M\)): fewer equations than unknowns — infinitely many solutions.

3. Rank-deficient (\(\text{rank}(\mathbf{A}) < \min(N,M)\)): even with \(N = M\), rows/columns are linearly dependent.

Tip

In seismology, we almost always face some combination of these — the inverse problem is ill-posed.

Part II — Least-Squares Inversion

Least-Squares Formulation

When no exact solution exists, we seek the model that best fits the data in a least-squares sense:

\[ \min_{\mathbf{m}} \;\; J(\mathbf{m}) = \frac{1}{2}\|\mathbf{A}\,\mathbf{m} - \mathbf{d}\|_2^2 \]

Expanding:

\[ J(\mathbf{m}) = \frac{1}{2}\left(\mathbf{A}\,\mathbf{m} - \mathbf{d}\right)^\top\left(\mathbf{A}\,\mathbf{m} - \mathbf{d}\right) \]

\[ = \frac{1}{2}\left(\mathbf{m}^\top\mathbf{A}^\top\mathbf{A}\,\mathbf{m} - 2\,\mathbf{m}^\top\mathbf{A}^\top\mathbf{d} + \mathbf{d}^\top\mathbf{d}\right) \]

Normal Equations

Setting the gradient to zero:

\[ \nabla_{\mathbf{m}} J = \mathbf{A}^\top\mathbf{A}\,\mathbf{m} - \mathbf{A}^\top\mathbf{d} = \mathbf{0} \]

yields the normal equations:

\[ \boxed{\mathbf{A}^\top\mathbf{A}\,\mathbf{m} = \mathbf{A}^\top\mathbf{d}} \]

If \(\mathbf{A}^\top\mathbf{A}\) is invertible, the least-squares solution is:

\[ \hat{\mathbf{m}}_{\text{LS}} = \left(\mathbf{A}^\top\mathbf{A}\right)^{-1}\mathbf{A}^\top\,\mathbf{d} \]

Note

\(\mathbf{A}^\top\) is the transpose of the matrix \(\mathbf{A}\). \(\mathbf{A}^\top\mathbf{A}\) is the Gram matrix — symmetric positive semi-definite, and positive definite iff \(\mathbf{A}\) has full column rank.

Transpose and Adjoint

The adjoint (or transpose for real-valued matrices) \(\mathbf{A}^H\) satisfies:

\[ \langle \mathbf{A}\,\mathbf{m},\, \mathbf{d} \rangle_{\mathbb{R}^N} = \langle \mathbf{m},\, \mathbf{A}^H\mathbf{d} \rangle_{\mathbb{R}^M} \]

Physical interpretation in seismology:

- \(\mathbf{A}\) = forward modeling (sources → receivers)

- \(\mathbf{A}^\top\) = migration / backprojection (receivers → model)

Unitary matrices satisfy \(\mathbf{A}^H\mathbf{A} = \mathbf{A}\,\mathbf{A}^H = \mathbf{I}\), so the adjoint is the inverse.

Example: the DFT matrix (up to a scaling factor).

Non-Uniqueness

A solution is non-unique when there exist distinct models \(\mathbf{m}_1 \neq \mathbf{m}_2\) such that

\[ \mathbf{A}\,\mathbf{m}_1 = \mathbf{A}\,\mathbf{m}_2 = \mathbf{d} \]

Equivalently, \(\mathbf{A}(\mathbf{m}_1 - \mathbf{m}_2) = \mathbf{0}\), i.e., the null space \(\mathcal{N}(\mathbf{A})\) is nontrivial.

If \(\hat{\mathbf{m}}\) is any solution, then \(\hat{\mathbf{m}} + \mathbf{m}_0\) is also a solution for any \(\mathbf{m}_0 \in \mathcal{N}(\mathbf{A})\).

Important

Non-uniqueness is fundamental in seismology — limited acquisition geometry means parts of the model are invisible to the data.

Minimum-Norm Solution

Among all solutions, select the one with smallest norm:

\[ \min_{\mathbf{m}} \;\; \|\mathbf{m}\|_2^2 \quad \text{subject to} \quad \mathbf{A}\,\mathbf{m} = \mathbf{d} \]

Using Lagrange multipliers, the minimum-norm solution is:

\[ \hat{\mathbf{m}}_{\text{MN}} = \mathbf{A}^\top\left(\mathbf{A}\,\mathbf{A}^\top\right)^{-1}\mathbf{d} \]

This solution:

- Lives entirely in the row space of \(\mathbf{A}\)

- Has no component in the null space

- Is unique among all solutions

Any vector \(\mathbf{m} \in \mathbb{R}^M\) can be decomposed uniquely using the orthogonal decomposition of the model space:

\[\mathbf{m} = \underbrace{\mathbf{m}_r}_{\in \mathcal{R}(\mathbf{A}^\top)} + \underbrace{\mathbf{m}_0}_{\in \mathcal{N}(\mathbf{A})}\]

where

\(\mathcal{R}(\mathbf{A})\) denotes the range (or column space) of \(\mathbf{A}\) — the set of all vectors that can be written as \(\mathbf{A}\mathbf{m}\) for some \(\mathbf{m}\).

\(\mathcal{N}(\mathbf{A})\) is the null space (or kernel) of \(\mathbf{A}\) — the set of all vectors that \(\mathbf{A}\) maps to zero:

\[\mathcal{N}(\mathbf{A}) = \{\mathbf{m} \in \mathbb{R}^M : \mathbf{A}\mathbf{m} = \mathbf{0}\}\]

These are the model components that produce no data whatsoever. In seismology, think of structure in the subsurface that your acquisition geometry simply cannot see — it’s there, but it generates no response at the receivers.

The notation parallels \(\mathcal{R}\): where \(\mathcal{R}(\mathbf{A})\) collects everything \(\mathbf{A}\) can produce, \(\mathcal{N}(\mathbf{A})\) collects everything \(\mathbf{A}\) annihilates.

Since \(\mathbf{A}\mathbf{m}_0 = \mathbf{0}\) by definition of the null space, the constraint \(\mathbf{A}\mathbf{m} = \mathbf{d}\) only sees the row-space component:

\[\mathbf{A}\mathbf{m} = \mathbf{A}\mathbf{m}_r + \mathbf{A}\mathbf{m}_0 = \mathbf{A}\mathbf{m}_r = \mathbf{d}\]

So the null-space component \(\mathbf{m}_0\) is invisible to the data — it’s a free parameter. Now look at what it does to the norm. Because the two components are orthogonal:

\[\|\mathbf{m}\|_2^2 = \|\mathbf{m}_r\|_2^2 + \|\mathbf{m}_0\|_2^2\]

The first term is fixed (determined by the constraint), and the second term is non-negative. The minimum is achieved when \(\mathbf{m}_0 = \mathbf{0}\), which means the solution lives entirely in \(\mathcal{R}(\mathbf{A}^\top)\).

You can also see this directly from the solution formula \(\hat{\mathbf{m}} = \mathbf{A}^\top(\mathbf{A}\mathbf{A}^\top)^{-1}\mathbf{d}\). The leading \(\mathbf{A}^\top\) guarantees the result is a linear combination of rows of \(\mathbf{A}\), i.e., it lives in the row space by construction.

Part III — SVD & Generalized Inverses

Singular Value Decomposition (SVD)

Any matrix \(\mathbf{A} \in \mathbb{R}^{N \times M}\) admits the factorization:

\[ \mathbf{A} = \mathbf{U}\,\boldsymbol{\Sigma}\,\mathbf{V}^\top \]

where:

- \(\mathbf{U} \in \mathbb{R}^{N \times N}\): orthonormal columns = left singular vectors (data space)

- \(\mathbf{V} \in \mathbb{R}^{M \times M}\): orthonormal columns = right singular vectors (model space)

- \(\boldsymbol{\Sigma} \in \mathbb{R}^{N \times M}\): diagonal with singular values \(\sigma_1 \geq \sigma_2 \geq \cdots \geq \sigma_r > 0\)

- \(r = \text{rank}(\mathbf{A})\)

SVD — Compact Form

Writing only the nonzero singular values:

\[ \mathbf{A} = \sum_{i=1}^{r} \sigma_i\,\mathbf{u}_i\,\mathbf{v}_i^\top \]

This is an outer-product expansion — each term is a rank-1 matrix weighted by \(\sigma_i\).

Computational note

We rarely form \(\mathbf{A}\) explicitly in seismology. We work with \(\mathbf{A}\,\mathbf{v}\) (forward) and \(\mathbf{A}^\top\mathbf{u}\) (adjoint/migration) as matrix-free operations.

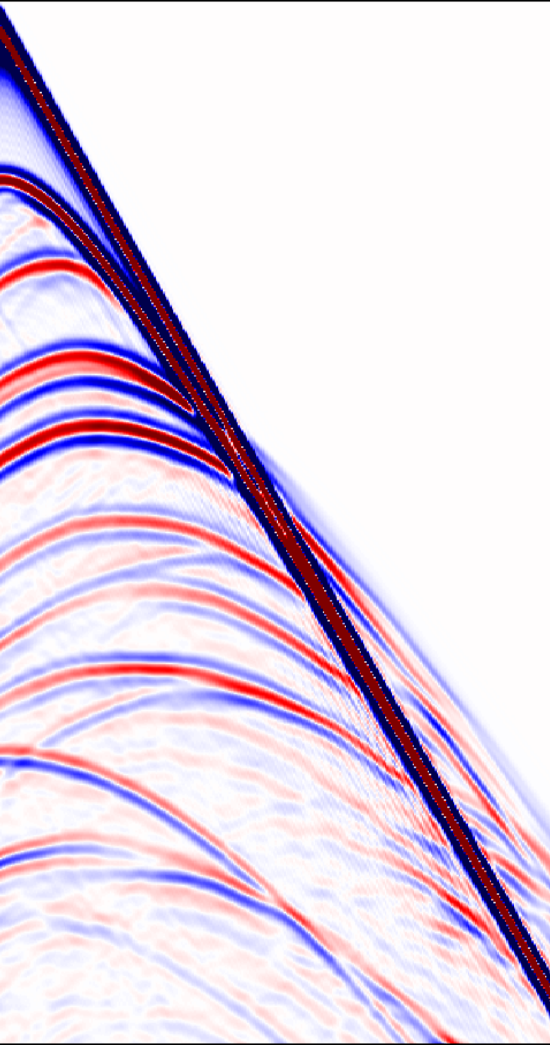

The Four Fundamental Subspaces

The SVD reveals the four fundamental subspaces of \(\mathbf{A}\) (Strang):

| Subspace | Basis | Dimension |

|---|---|---|

| Column space \(\mathcal{R}(\mathbf{A})\) | \(\{\mathbf{u}_1, \ldots, \mathbf{u}_r\}\) | \(r\) |

| Left null space \(\mathcal{N}(\mathbf{A}^\top)\) | \(\{\mathbf{u}_{r+1}, \ldots, \mathbf{u}_N\}\) | \(N - r\) |

| Row space \(\mathcal{R}(\mathbf{A}^\top)\) | \(\{\mathbf{v}_1, \ldots, \mathbf{v}_r\}\) | \(r\) |

| Null space \(\mathcal{N}(\mathbf{A})\) | \(\{\mathbf{v}_{r+1}, \ldots, \mathbf{v}_M\}\) | \(M - r\) |

Orthogonal decompositions:

\[ \mathbb{R}^N = \mathcal{R}(\mathbf{A}) \oplus \mathcal{N}(\mathbf{A}^\top), \qquad \mathbb{R}^M = \mathcal{R}(\mathbf{A}^\top) \oplus \mathcal{N}(\mathbf{A}) \]

The Fundamental Theorem of Linear Algebra

The Moore–Penrose Pseudo-Inverse

The pseudo-inverse \(\mathbf{A}^{\dagger}\) is defined via the SVD:

\[ \mathbf{A}^{\dagger} = \mathbf{V}\,\boldsymbol{\Sigma}^{\dagger}\,\mathbf{U}^\top \]

where \(\boldsymbol{\Sigma}^{\dagger}\) inverts the nonzero singular values: \(\sigma_i^{\dagger} = 1/\sigma_i\) for \(i = 1,\ldots,r\) and zero otherwise.

. . . \(\mathcal{N}(\mathbf{A})\) is the null space (or kernel) of \(\mathbf{A}\) — the set of all vectors that \(\mathbf{A}\) maps to zero:

\[\mathcal{N}(\mathbf{A}) = \{\mathbf{m} \in \mathbb{R}^M : \mathbf{A}\mathbf{m} = \mathbf{0}\}\]

These are the model components that produce no data whatsoever. In seismology, think of structure in the subsurface that your acquisition geometry simply cannot see — it’s there, but it generates no response at the receivers.

The notation parallels \(\mathcal{R}\): where \(\mathcal{R}(\mathbf{A})\) collects everything \(\mathbf{A}\) can produce, \(\mathcal{N}(\mathbf{A})\) collects everything \(\mathbf{A}\) annihilates. Some texts write \(\text{Ker}(\mathbf{A})\) instead (from the German Kern). Explicitly:

\[ \mathbf{A}^{\dagger} = \sum_{i=1}^{r} \frac{1}{\sigma_i}\,\mathbf{v}_i\,\mathbf{u}_i^\top \]

Four Cases of the Pseudo-Inverse

| Case | Condition | Pseudo-inverse | Solution |

|---|---|---|---|

| Square, full rank | \(N = M = r\) | \(\mathbf{A}^{\dagger} = \mathbf{A}^{-1}\) | Exact |

| Overdetermined, full col. rank | \(N > M = r\) | \(\mathbf{A}^{\dagger} = (\mathbf{A}^\top\mathbf{A})^{-1}\mathbf{A}^\top\) | Least-squares |

| Underdetermined, full row rank | \(N < M,\; N = r\) | \(\mathbf{A}^{\dagger} = \mathbf{A}^\top(\mathbf{A}\mathbf{A}^\top)^{-1}\) | Minimum-norm |

| Rank-deficient | \(r < \min(N,M)\) | \(\mathbf{A}^{\dagger} = \mathbf{V}_r\boldsymbol{\Sigma}_r^{-1}\mathbf{U}_r^\top\) | Min-norm LS |

The pseudo-inverse always gives the minimum-norm least-squares solution:

\[\hat{\mathbf{m}} = \mathbf{A}^{\dagger}\mathbf{d} = \arg\min_{\mathbf{m}} \|\mathbf{m}\|_2 \quad \text{s.t.} \quad \|\mathbf{A}\mathbf{m} - \mathbf{d}\|_2 = \min\]

Over- & Underdetermined Systems

Overdetermined (\(N > M\))

- More data than unknowns

- Noise-free: may be inconsistent

- With noise: least-squares solution

\[ \hat{\mathbf{m}} = (\mathbf{A}^\top\mathbf{A})^{-1}\mathbf{A}^\top\mathbf{d} \]

Seismic example: least-squares migration.

Underdetermined (\(N < M\))

- Fewer data than unknowns

- Infinitely many solutions

- Minimum-norm solution

\[ \hat{\mathbf{m}} = \mathbf{A}^\top(\mathbf{A}\mathbf{A}^\top)^{-1}\mathbf{d} \]

Seismic example: Wavefield reconstruction from undersampled data.

Part IV — Regularization

Condition Number & Ill-Posedness

The condition number of \(\mathbf{A}\) is:

\[ \kappa(\mathbf{A}) = \frac{\sigma_1}{\sigma_r} \]

- \(\kappa \approx 1\): well-conditioned — small data perturbations cause small model perturbations

- \(\kappa \gg 1\): ill-conditioned — small data errors get amplified

Hadamard’s conditions for well-posedness:

- A solution exists

- The solution is unique

- The solution depends continuously on the data

Most seismic inverse problems violate conditions 2 and/or 3.

Why Seismic Inversion Is Ill-Posed

Limited bandwidth — seismic sources have bandpass character:

- Missing low frequencies → non-uniqueness in long wavelengths

- Missing high frequencies → limited resolution

Limited aperture — finite acquisition geometry:

- Illumination gaps in the subsurface

- Null-space components invisible to the data

Noise — ambient, coherent, and acquisition noise:

- Amplified through inversion of small singular values

Effect of Noise on Inversion

With noisy data \(\mathbf{d} = \mathbf{A}\mathbf{m}_{\text{true}} + \mathbf{n}\), the naive pseudo-inverse gives:

\[ \hat{\mathbf{m}} = \mathbf{A}^{\dagger}\mathbf{d} = \sum_{i=1}^{r}\frac{\mathbf{u}_i^\top\mathbf{d}}{\sigma_i}\,\mathbf{v}_i \]

\[ = \underbrace{\sum_{i=1}^{r}\frac{\mathbf{u}_i^\top\mathbf{A}\mathbf{m}_{\text{true}}}{\sigma_i}\mathbf{v}_i}_{\text{signal}} + \underbrace{\sum_{i=1}^{r}\frac{\mathbf{u}_i^\top\mathbf{n}}{\sigma_i}\mathbf{v}_i}_{\text{amplified noise}} \]

For small \(\sigma_i\), the noise terms \(\mathbf{u}_i^\top\mathbf{n}/\sigma_i\) blow up.

Truncated SVD (TSVD)

Regularize by keeping only the \(p < r\) largest singular values:

\[ \hat{\mathbf{m}}_{\text{TSVD}} = \sum_{i=1}^{p} \frac{\mathbf{u}_i^\top\mathbf{d}}{\sigma_i}\,\mathbf{v}_i \]

Trade-off: choosing \(p\)

- \(p\) large → better resolution but more noise amplification

- \(p\) small → more stable but loss of detail

Tip

The Picard condition helps determine where to truncate: \(|\mathbf{u}_i^\top\mathbf{d}|\) should decay faster than \(\sigma_i\).

Tikhonov Regularization

Add a penalty term to the least-squares objective:

\[ \min_{\mathbf{m}} \;\; \frac{1}{2}\|\mathbf{A}\,\mathbf{m} - \mathbf{d}\|_2^2 + \frac{\lambda}{2}\|\mathbf{m}\|_2^2 \]

Setting the gradient to zero yields the damped least-squares solution:

\[ \boxed{\hat{\mathbf{m}}_{\lambda} = \left(\mathbf{A}^\top\mathbf{A} + \lambda\,\mathbf{I}\right)^{-1}\mathbf{A}^\top\,\mathbf{d}} \]

In terms of the SVD, the filter factors become:

\[ f_i = \frac{\sigma_i^2}{\sigma_i^2 + \lambda} \]

so each component is damped rather than truncated.

Tikhonov as an Augmented System

The Tikhonov objective

\[ \min_{\mathbf{m}} \;\; \frac{1}{2}\|\mathbf{A}\,\mathbf{m} - \mathbf{d}\|_2^2 + \frac{\lambda}{2}\|\mathbf{m}\|_2^2 \]

is equivalent to a single least-squares problem with an augmented system:

\[ \min_{\mathbf{m}} \;\; \left\| \begin{bmatrix} \mathbf{A} \\ \sqrt{\lambda}\,\mathbf{I} \end{bmatrix} \mathbf{m} - \begin{bmatrix} \mathbf{d} \\ \mathbf{0} \end{bmatrix} \right\|_2^2 \]

Defining \(\tilde{\mathbf{A}} = \begin{bmatrix} \mathbf{A} \\ \sqrt{\lambda}\,\mathbf{I} \end{bmatrix}\) and \(\tilde{\mathbf{d}} = \begin{bmatrix} \mathbf{d} \\ \mathbf{0} \end{bmatrix}\), verify that the normal equations \(\tilde{\mathbf{A}}^\top\tilde{\mathbf{A}}\,\mathbf{m} = \tilde{\mathbf{A}}^\top\tilde{\mathbf{d}}\) give: \(\left(\mathbf{A}^\top\mathbf{A} + \lambda\,\mathbf{I}\right)\mathbf{m} = \mathbf{A}^\top\mathbf{d}\).

Why this matters

This formulation turns regularized inversion into a standard overdetermined least-squares problem. Solvers like LSQR work directly on the augmented system — no need to form \(\mathbf{A}^\top\mathbf{A}\) explicitly. The damp parameter in LSQR is precisely \(\sqrt{\lambda}\).

Tikhonov — General Form

The generalized Tikhonov functional:

\[ \min_{\mathbf{m}} \;\; \frac{1}{2}\|\mathbf{A}\,\mathbf{m} - \mathbf{d}\|_2^2 + \frac{\lambda}{2}\|\mathbf{L}\,\mathbf{m}\|_2^2 \]

where \(\mathbf{L}\) is a regularization operator:

| \(\mathbf{L}\) | Effect |

|---|---|

| \(\mathbf{I}\) | Minimum norm (zeroth-order Tikhonov) |

| \(\mathbf{D}_1\) (first difference) | Penalize roughness — prefer flat models |

| \(\mathbf{D}_2\) (second difference) | Penalize curvature — prefer smooth models |

Corresponds to

\[ \min_{\mathbf{m}} \;\; \left\| \begin{bmatrix} \mathbf{A} \\ \sqrt{\lambda}\,\mathbf{L} \end{bmatrix} \mathbf{m} - \begin{bmatrix} \mathbf{d} \\ \mathbf{0} \end{bmatrix} \right\|_2^2 \]

Solution: \(\hat{\mathbf{m}}_{\lambda} = \left(\mathbf{A}^\top\mathbf{A} + \lambda\,\mathbf{L}^\top\mathbf{L}\right)^{-1}\mathbf{A}^\top\,\mathbf{d}\)

Regularization as a Bias–Variance Trade-off

\[ \text{MSE}(\hat{\mathbf{m}}) = \underbrace{\|\text{bias}\|^2}_{\text{increases with } \lambda} + \underbrace{\text{variance}}_{\text{decreases with } \lambda} \]

- No regularization (\(\lambda = 0\)): zero bias, high variance → unstable, noisy

- Heavy regularization (\(\lambda \to \infty\)): high bias, zero variance → oversmoothed

- Optimal \(\lambda\): balances the two

Methods for choosing \(\lambda\):

- L-curve criterion

- Discrepancy principle: \(\|\mathbf{A}\hat{\mathbf{m}} - \mathbf{d}\| \approx \|\mathbf{n}\|\)

The L-Curve Criterion

Plot \(\|\hat{\mathbf{m}}_\lambda\|_2\) vs. \(\|\mathbf{A}\hat{\mathbf{m}}_\lambda - \mathbf{d}\|_2\) on a log-log scale as \(\lambda\) varies:

The corner of the L marks the optimal \(\lambda\) — the point of maximum curvature where the trade-off between fitting the data and controlling the solution norm is most favorable.

Bayesian Interpretation

Rewrite Tikhonov regularization as maximum a posteriori (MAP) estimation:

\[ \hat{\mathbf{m}}_{\text{MAP}} = \arg\max_{\mathbf{m}} \; p(\mathbf{m} \mid \mathbf{d}) \propto p(\mathbf{d} \mid \mathbf{m})\,p(\mathbf{m}) \]

With Gaussian assumptions:

- Likelihood: \(p(\mathbf{d} \mid \mathbf{m}) \propto \exp\!\left(-\frac{1}{2}(\mathbf{d} - \mathbf{A}\mathbf{m})^\top\mathbf{C}_d^{-1}(\mathbf{d} - \mathbf{A}\mathbf{m})\right)\)

- Prior: \(p(\mathbf{m}) \propto \exp\!\left(-\frac{1}{2}\mathbf{m}^\top\mathbf{C}_m^{-1}\mathbf{m}\right)\)

Taking \(-\log\) recovers a generalized Tikhonov problem:

\[ \min_{\mathbf{m}} \;\; \frac{1}{2}\|\mathbf{C}_d^{-1/2}(\mathbf{A}\mathbf{m} - \mathbf{d})\|_2^2 + \frac{1}{2}\|\mathbf{C}_m^{-1/2}\mathbf{m}\|_2^2 \]

where \(\mathbf{C}_d\) and \(\mathbf{C}_m\) are data and model covariance matrices.

Covariance and Factored Regularization

Factor the inverse covariances:

\[ \mathbf{C}_d^{-1} = \mathbf{W}_d^\top\mathbf{W}_d, \qquad \mathbf{C}_m^{-1} = \mathbf{W}_m^\top\mathbf{W}_m \]

The MAP solution becomes:

\[ \hat{\mathbf{m}} = \left(\mathbf{A}^\top\mathbf{W}_d^\top\mathbf{W}_d\mathbf{A} + \mathbf{W}_m^\top\mathbf{W}_m\right)^{-1}\mathbf{A}^\top\mathbf{W}_d^\top\mathbf{W}_d\,\mathbf{d} \]

Connections:

| Statistical quantity | Regularization quantity |

|---|---|

| \(\mathbf{C}_d^{-1}\) | Data weighting |

| \(\mathbf{C}_m^{-1}\) | Model penalty (= \(\lambda\,\mathbf{L}^\top\mathbf{L}\)) |

| \(\mathbf{C}_m^{-1/2}\) | Square root of model precision |

Part V — Application

Deconvolution — The Setup

A sparse reflectivity \(r(t)\) convolved with a bandlimited Ricker wavelet \(w(t)\) produces the observed trace \(d(t)\). The wavelet spectrum \(|W(f)|\) shows the bandpass character — frequencies near zero and above ~60 Hz carry no signal, making inversion ill-posed.

Deconvolution as an Inverse Problem

A seismic trace is modeled as a convolution:

\[ d(t) = w(t) * r(t) + n(t) \]

- \(w(t)\): source wavelet

- \(r(t)\): reflectivity series

- \(n(t)\): noise

In matrix form:

\[ \mathbf{d} = \mathbf{W}\,\mathbf{r} + \mathbf{n} \]

where \(\mathbf{W}\) is a Toeplitz convolution matrix built from the wavelet.

Deconvolution = inversion for \(\mathbf{r}\) given \(\mathbf{d}\) and \(\mathbf{W}\).

The Toeplitz Convolution Matrix

Convolution \(d_k = \sum_j w_{k-j}\,r_j\) written as a matrix–vector product:

\[ \begin{bmatrix} d_1 \\ d_2 \\ d_3 \\ d_4 \\ d_5 \\ \vdots \end{bmatrix} = \begin{bmatrix} w_0 & 0 & 0 & \cdots \\ w_1 & w_0 & 0 & \cdots \\ w_2 & w_1 & w_0 & \cdots \\ 0 & w_2 & w_1 & \cdots \\ 0 & 0 & w_2 & \cdots \\ \vdots & \vdots & \vdots & \ddots \end{bmatrix} \begin{bmatrix} r_1 \\ r_2 \\ r_3 \\ \vdots \end{bmatrix} \]

Why Toeplitz? Because the system is time-invariant — shifting \(r\) by one sample shifts \(d\) by one sample. Each column of \(\mathbf{W}\) is the wavelet, shifted down by one row. This means every descending diagonal is constant, which is the defining property of a Toeplitz matrix.

Computational consequence

A Toeplitz matrix–vector product is a convolution and costs \(\mathcal{O}(N \log N)\) via the FFT, not \(\mathcal{O}(NM)\). This is what makes matrix-free seismic operators practical.

Deconvolution — Why It’s Ill-Posed

The wavelet \(w(t)\) is bandlimited, so:

- \(\mathbf{W}\) has rapidly decaying singular values

- Low and high-frequency reflectivity components are attenuated

- Inversion amplifies noise at those frequencies

Regularized deconvolution:

\[ \hat{\mathbf{r}} = \left(\mathbf{W}^\top\mathbf{W} + \lambda\,\mathbf{I}\right)^{-1}\mathbf{W}^\top\mathbf{d} \]

In the Fourier domain (circulant approximation):

\[ \hat{R}(f) = \frac{\overline{W}(f)}{|W(f)|^2 + \lambda}\,D(f) \]

This is the Wiener filter.

Numerical Examples in Julia

Set up matrix-free operators using JOLI.jl:

The adjoint W' is defined to satisfy \(\langle \mathbf{W}\mathbf{r}, \mathbf{d}\rangle = \langle \mathbf{r}, \mathbf{W}^\top\mathbf{d}\rangle\).

Julia — Solving with LSQR

The damp parameter corresponds to \(\sqrt{\lambda}\) in the Tikhonov formulation:

\[ \min_{\mathbf{r}} \;\; \|\mathbf{W}\mathbf{r} - \mathbf{d}\|_2^2 + \lambda\|\mathbf{r}\|_2^2 \]

Summary

Key concepts:

- Forward vs. inverse problems

- Least-squares & normal equations

- SVD and fundamental subspaces

- Pseudo-inverse — unifies all cases

- Regularization as bias–variance trade-off

Practical takeaways:

- Always assess the condition number

- Use matrix-free operators in large-scale problems

- Choose regularization via L-curve or discrepancy principle

- The Bayesian view gives a probabilistic interpretation of regularization

- Deconvolution illustrates all key ideas

References

- Aster, R.C., Borchers, B. & Thurber, C.H. Parameter Estimation and Inverse Problems. Academic Press.

- Menke, W. Geophysical Data Analysis: Discrete Inverse Theory. Academic Press.

- Tarantola, A. Inverse Problem Theory and Methods for Model Parameter Estimation. SIAM.

This lecture was prepared with the assistance of Claude (Anthropic) and validated by Felix J. Herrmann.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.